Mar 31, 2026 | Read time 6 min

Speech-to-text in production: what 36 years of hard lessons taught me

The founder who built one of the first neural network speech recognition systems in 1989, on latency, turn detection and faulty pipelines

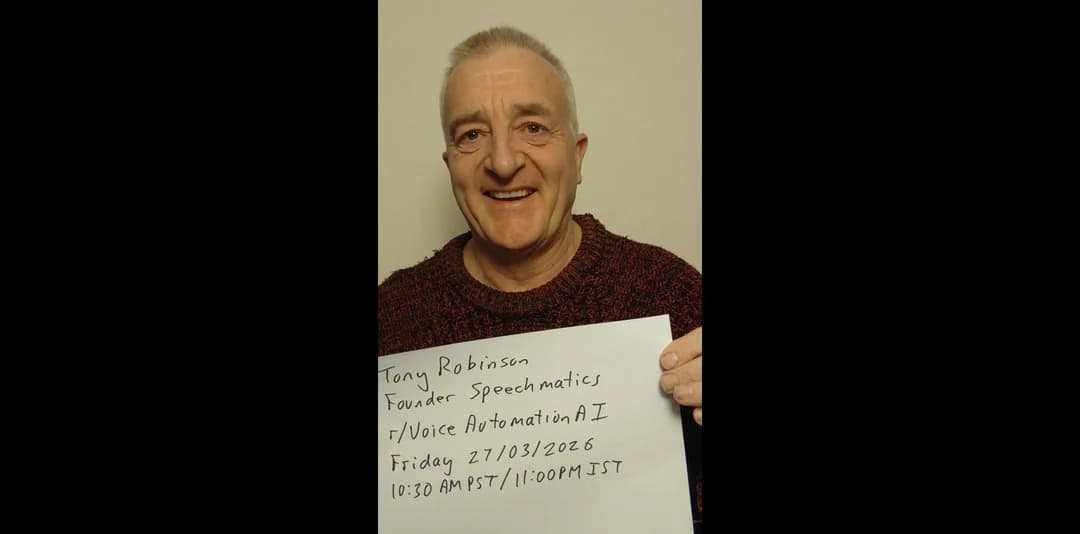

Dr Tony Robinson began his PhD research into speech recognition at Cambridge in 1985 and built one of the first neural-network-based recognition systems four years later.

He founded Speechmatics, shaped a generation of Cambridge researchers who went on to build the Voice AI infrastructure at Microsoft, Amazon and Apple, and has spent four decades on one problem: Voice AI works in demos and breaks in production.

Last week he answered 24 hours' worth of questions from builders in a Reddit AMA.

Here are some of his top insights.

Where does a speech-to-text pipeline actually break down?

Automatic speech recognition has crossed a threshold. In Tony's framing, it is now more like software than machine learning. You can plug it in and it works for a wide range of use cases. The accuracy is there. The barrier to entry is low.

The problem is everything else. In a real-time STT-to-LLM-to-TTS pipeline, the speech-to-text component is rarely where things go wrong; the LLM is. In his own testing, conversations stay fun until the LLM gets stuck in a loop and simply becomes wrong. Getting reliable, fact-checkable language model output is the unsolved layer of Voice AI in 2026.

What the industry actually needs, Tony argues, is not better LLMs but provably correct ones: systems where the model is doing nothing more than enabling search of a verified facts database, with fact-checking built into the output.
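The architecture Tony describes can be sketched in a few lines. This is a minimal illustration, not Speechmatics' or anyone else's production design: it assumes a hypothetical in-memory `VERIFIED_FACTS` store and a stand-in `propose` function in place of the language model. The model only selects a fact ID; the reply text always comes from the verified store, and anything the model cannot ground is refused rather than improvised.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Fact:
    fact_id: str
    text: str


# Hypothetical verified facts store; a real system would use a curated, audited database.
VERIFIED_FACTS = {
    "opening_hours": Fact("opening_hours", "The clinic is open 9am to 5pm, Monday to Friday."),
    "holidays": Fact("holidays", "The clinic is closed on public holidays."),
}

REFUSAL = "I don't have a verified answer to that."


def grounded_reply(question: str, propose: Callable[[str], str]) -> tuple[str, Optional[str]]:
    """Return (reply_text, fact_id). The reply text is never generated freely.

    `propose` stands in for the language model: it maps a question to a fact ID.
    The built-in check is that the ID must exist in the verified store.
    """
    fact = VERIFIED_FACTS.get(propose(question))
    if fact is None:
        # Nothing verifiable to point at: refuse instead of guessing.
        return REFUSAL, None
    return fact.text, fact.fact_id


def toy_model(question: str) -> str:
    """Hypothetical stand-in for an LLM-based retriever."""
    q = question.lower()
    if "holiday" in q:
        return "holidays"
    if "open" in q:
        return "opening_hours"
    return "unknown"


print(grounded_reply("When are you open?", toy_model))
print(grounded_reply("What's the weather like?", toy_model))
```

Returning the fact ID with every reply also gives each spoken answer an audit trail.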

A wrong answer delivered in a natural, human-sounding voice can be more dangerous, because people may trust it more than they should. The accountability problem has got worse as voice quality has got better.

Why does latency spike in real-time Voice AI pipelines?

The one-second rule has held across decades of voice interface design. If the user does not get a reply within one second of finishing their turn, they lose faith in the system. Go over it and the interaction degrades, regardless of how accurate the transcription is.

The implication for pipeline design is stark. A cascaded system is only as fast as its slowest component. One delay anywhere in the chain and the answer arrives late, which in Voice AI is effectively a wrong answer.
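One way to see why delays compound is to write the budget down. The sketch below assumes a strictly sequential hand-off with hypothetical stage names and illustrative timings (not measurements of any real system). It sums the stages, checks the total against the one-second rule, and reports which stage contributes most.

```python
BUDGET_MS = 1000.0  # the one-second rule


def end_to_end_ms(stages: dict[str, float]) -> float:
    """With a strictly sequential hand-off, each stage waits for the previous one, so delays add."""
    return sum(stages.values())


def check_budget(stages: dict[str, float], budget_ms: float = BUDGET_MS) -> tuple[bool, str]:
    """Return (fits_budget, name_of_largest_stage)."""
    largest = max(stages, key=lambda name: stages[name])
    return end_to_end_ms(stages) <= budget_ms, largest


# Illustrative numbers only, not benchmarks.
turn = {
    "end_of_turn_detection": 300.0,
    "asr_finalize": 150.0,
    "llm_first_token": 450.0,
    "tts_first_audio": 200.0,
}
print(end_to_end_ms(turn), check_budget(turn))
```

Real pipelines overlap stages by streaming partial results, so the plain sum is a pessimistic bound. It still makes the point that one slow stage is enough to push the whole turn past one second.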

Any latency introduced at the ASR stage is unrecoverable. But Tony also flags an opposite failure mode: a response that arrives unnaturally fast puts people off too. That one, at least, is easy to fix.
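The too-fast case is the easy one because it only requires holding a reply back. A minimal sketch, assuming a hypothetical minimum gap `MIN_GAP_S`; the 0.2 s value is a placeholder, not a figure from Tony or from any guideline.

```python
MIN_GAP_S = 0.2  # hypothetical floor on the user-stop-to-reply gap; tune for your product


def hold_time(user_stopped_at: float, reply_ready_at: float, min_gap_s: float = MIN_GAP_S) -> float:
    """Seconds to delay playback so a reply never starts sooner than min_gap_s after the user stops.

    Both timestamps are in seconds on the same clock (e.g. time.monotonic()).
    A reply that is already late gets no extra delay.
    """
    elapsed = reply_ready_at - user_stopped_at
    return max(0.0, min_gap_s - elapsed)


print(hold_time(10.0, 10.05))  # reply ready after 50 ms: hold it back
print(hold_time(10.0, 10.5))   # reply ready after 500 ms: play immediately
```

The function can only ever add delay, which is exactly why the floor is easy to enforce and the one-second ceiling is not.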

Will speech-to-speech models replace cascaded pipelines?

One of the most substantive debates in Voice AI is whether modular, cascaded pipelines will eventually be superseded by end-to-end speech-to-speech models that skip text as an intermediate representation entirely. Tony has held a clear position on this for decades.

First, he pushes back on the word "cascaded" as a misnomer. A real production pipeline is not a simple sequential hand-off. You can interrupt the TTS. There is metadata beyond text. Components can run in parallel. The architecture is more fluid than the name implies.
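To illustrate "metadata beyond text" and components running in parallel, here is a minimal asyncio sketch. The `AsrEvent` fields, the two consumers and the 0.8 confidence threshold are all hypothetical, chosen for illustration rather than taken from any vendor's message schema.

```python
import asyncio
from dataclasses import dataclass
from typing import Optional


@dataclass
class AsrEvent:
    """One inter-stage message: the text plus metadata that travels with it."""
    text: str
    is_final: bool
    start_s: float
    end_s: float
    speaker: Optional[str] = None
    confidence: Optional[float] = None


async def detect_intent(event: AsrEvent) -> str:
    await asyncio.sleep(0)  # stand-in for real work (e.g. an LLM call)
    return "booking" if "book" in event.text.lower() else "other"


async def needs_read_back(event: AsrEvent) -> bool:
    await asyncio.sleep(0)  # stand-in for real work
    # Uses metadata rather than text: low-confidence finals get confirmed with the user.
    return event.is_final and event.confidence is not None and event.confidence < 0.8


async def fan_out(event: AsrEvent) -> list:
    # Both downstream components receive the same event and run concurrently.
    return await asyncio.gather(detect_intent(event), needs_read_back(event))


event = AsrEvent("I'd like to book a table", is_final=True, start_s=0.0, end_s=1.4,
                 speaker="S1", confidence=0.62)
print(asyncio.run(fan_out(event)))
```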

His case for text in the middle comes down to factoring. If you can break a problem into separable components, you can debug each one, optimize each section independently, and train different parts on different datasets. You also get accountability: text is something you can inspect, verify, and parse into SQL queries or structured workflows in a way raw audio simply cannot be.
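A small example of the point about parsing text into SQL. It assumes a hypothetical `orders` table in an in-memory SQLite database and uses a regular expression as a stand-in for whatever entity extraction a real system would use (an LLM, a grammar or a classifier). Because the intermediate value is text, each step can be logged, unit-tested and audited.

```python
import re
import sqlite3
from typing import Optional

# Hypothetical schema and data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id TEXT PRIMARY KEY, status TEXT)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [("A123", "shipped"), ("B456", "processing")])

# Matches e.g. "order A123" or "order b 456" in a transcript (stand-in for real entity extraction).
ORDER_ID = re.compile(r"\border\s+([a-z])\s?(\d{3})\b", re.IGNORECASE)


def transcript_to_query(transcript: str) -> Optional[tuple[str, tuple[str]]]:
    """Map a transcript to a parameterized SQL query, or None if no order ID is found."""
    match = ORDER_ID.search(transcript)
    if match is None:
        return None
    order_id = (match.group(1) + match.group(2)).upper()
    return "SELECT status FROM orders WHERE order_id = ?", (order_id,)


def answer(transcript: str) -> str:
    query = transcript_to_query(transcript)
    if query is None:
        return "Sorry, which order do you mean?"
    row = conn.execute(*query).fetchone()
    return f"That order is {row[0]}." if row else "I couldn't find that order."


print(answer("Where is order a123?"))
```

Using a parameterized query (the `?` placeholder) rather than string formatting keeps transcript text from ever being executed as SQL.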

Speech-to-speech will win eventually, he concedes. But the first organization to get there will need to train on the full internet, have built a credible world model, and have a data center or two spare. The modular approach is not a compromise in the meantime. For many use cases, it is the better architecture on its own terms.

Why is turn detection in Voice AI still unsolved?

Ask any developer shipping a real-time Voice AI product what keeps them up at night, and turn detection appears quickly. When has a speaker finished their turn? The naive answer: when they stop talking. The real answer is considerably harder.

Tony has been working on this since 1989, and is candid that it still has a long way to go. A slow speaker might pause mid-thought. A filler word like "umm" signals continuation, not completion. A second voice in the room introduces ambiguity. To keep latency low, the ASR pipeline will already have completed, and the LLM pipeline may already be in motion, by the time a turn-continuation signal arrives. If "umm" comes in at the half-second mark, the system has to invalidate partial results, discard intermediate state and restore the previous state, all in real time.
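The invalidate-and-restore step can be sketched as a small state machine. This is a simplified, synchronous illustration with hypothetical names (`TurnState`, `on_end_of_turn_guess` and so on); a real system would also cancel in-flight LLM and TTS work. The idea is to snapshot the conversation before acting on an end-of-turn guess, so that a late "umm" can roll it back.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class TurnState:
    committed: list = field(default_factory=list)  # (role, text) turns accepted so far
    pending_user_text: str = ""                    # words in the user's current turn
    draft_reply: Optional[str] = None              # reply generated on a speculative end-of-turn
    snapshot: Optional[list] = None                # committed history before speculating

    def on_words(self, text: str) -> None:
        if self.draft_reply is not None:
            # The user kept talking ("umm", more words): the end-of-turn guess was wrong.
            self.rollback()
        self.pending_user_text = f"{self.pending_user_text} {text}".strip()

    def on_end_of_turn_guess(self, generate: Callable[[str], str]) -> None:
        self.snapshot = list(self.committed)  # copy, so rollback can restore it
        self.committed.append(("user", self.pending_user_text))
        self.draft_reply = generate(self.pending_user_text)

    def rollback(self) -> None:
        # Invalidate the partial result, discard intermediate state, restore the snapshot.
        if self.snapshot is not None:
            self.committed = self.snapshot
        self.draft_reply = None
        self.snapshot = None

    def on_reply_spoken(self) -> None:
        self.committed.append(("assistant", self.draft_reply))
        self.pending_user_text = ""
        self.draft_reply = None
        self.snapshot = None


state = TurnState()
state.on_words("I need a flight to")
state.on_end_of_turn_guess(lambda text: "Where would you like to fly from?")  # speculative
state.on_words("umm, Paris")  # continuation arrives: the speculative reply is discarded
state.on_end_of_turn_guess(lambda text: "Paris it is. When would you like to travel?")
state.on_reply_spoken()
print(state.committed)
```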

For many production use cases, the current state of the art is good enough. But you can always break it if you try hard enough, and the question for builders is whether it holds in the specific context they are deploying into.

On whether Voice AI should be able to interrupt the user: yes, Tony says, and frames it as a world model problem. If the system has enough context to know the user is heading somewhere incorrect, it should be able to redirect. Most current LLMs are too nice. A system with a genuinely good model of the user could interrupt appropriately. Not there yet.

What is the most underused feature in Voice AI?

Tony's answer is immediate: speaker diarization. The ability to distinguish between different speakers in an audio stream is treated as a secondary concern in many speech-to-text implementations. It should not be.

In a meeting or multi-party conversation, feeding speaker-tagged transcripts into an LLM changes what the model can do. Who said what, and when, is often as important as what was said. One wrong speaker assignment can change the meaning of the conversation entirely. Diarization is an ASR problem, not something a better LLM will fix, and passing clean speaker labels downstream unlocks substantially richer analysis.
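A minimal sketch of passing speaker labels downstream, assuming a hypothetical `Segment` record (speaker label, start time, text) as the diarized ASR output. The `[mm:ss] S1:` line format is an illustrative choice, not a required prompt format. Consecutive segments from the same speaker are merged so the model sees clean turns.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str    # diarization label, e.g. "S1" (hypothetical format)
    start_s: float  # start time in seconds
    text: str


def to_prompt_lines(segments: list[Segment]) -> str:
    """Render who said what, and when, merging consecutive same-speaker segments."""
    merged: list[Segment] = []
    for seg in sorted(segments, key=lambda s: s.start_s):
        if merged and merged[-1].speaker == seg.speaker:
            merged[-1].text += " " + seg.text
        else:
            # Copy so the caller's segments are not mutated by the merge above.
            merged.append(Segment(seg.speaker, seg.start_s, seg.text))
    return "\n".join(
        f"[{int(s.start_s // 60):02d}:{int(s.start_s % 60):02d}] {s.speaker}: {s.text}"
        for s in merged
    )


segments = [
    Segment("S1", 0.0, "Can we ship Friday?"),
    Segment("S2", 3.2, "No."),
    Segment("S2", 4.0, "QA isn't done."),
    Segment("S1", 75.5, "Then Monday."),
]
print(to_prompt_lines(segments))
```

Attribute the "No." to S1 instead of S2 and the transcript tells a different story about who objected, which is the sense in which one wrong speaker assignment changes the meaning.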

Barge-in detection, the ability to handle a user interrupting the system mid-response, is a different kind of problem: a pipeline problem, and not a particularly hard one. If the ASR detects new audio, the TTS should shut down. With the right signalling architecture, it can shut down faster still. Solvable.
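A minimal asyncio sketch of the barge-in rule "if new user audio is detected, shut the TTS down". `speak` and `fake_vad` are hypothetical stand-ins for TTS playback and the ASR or voice-activity side; the signal between them is a plain `asyncio.Event`. Because playback is cancelled on an event rather than on a polling interval, the TTS stops at its next await point.

```python
import asyncio


async def speak(chunks: list[str], played: list[str], chunk_s: float = 0.01) -> None:
    """Stand-in for TTS playback: 'plays' one audio chunk at a time."""
    for chunk in chunks:
        played.append(chunk)
        await asyncio.sleep(chunk_s)


async def run_turn(chunks: list[str], user_speaks_after_s: float) -> tuple[list[str], bool]:
    """Play the reply, but stop as soon as the (simulated) user starts talking."""
    played: list[str] = []
    user_audio = asyncio.Event()

    async def fake_vad() -> None:
        # Stand-in for the ASR side detecting new user audio.
        await asyncio.sleep(user_speaks_after_s)
        user_audio.set()

    tts = asyncio.create_task(speak(chunks, played))
    vad = asyncio.create_task(fake_vad())
    barge_in = asyncio.create_task(user_audio.wait())

    done, _ = await asyncio.wait({tts, barge_in}, return_when=asyncio.FIRST_COMPLETED)
    interrupted = barge_in in done and not tts.done()

    # Shut everything down; cancelling an already-finished task is a no-op.
    for task in (tts, vad, barge_in):
        task.cancel()
    await asyncio.gather(tts, vad, barge_in, return_exceptions=True)
    return played, interrupted


played, interrupted = asyncio.run(run_turn([f"chunk{i}" for i in range(10)], user_speaks_after_s=0.035))
print(interrupted, played)
```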

Why is speech recognition still worse for low-resource languages?

Speech recognition still has real challenges in low-resource languages, but Tony's position is that the barrier is economic rather than architectural.

The Speechmatics model shares parameters across all languages. When the deep learning architecture improves, every language improves. The problem is that the money to build and deploy these systems has historically concentrated where English is spoken, which has priced parts of the world out.

That is shifting. Speech-to-text costs are falling and volumes are growing, and the fastest adoption is already moving beyond the English-speaking world.

For communities with low literacy rates, voice interfaces carry an access advantage that text-based systems cannot offer. When the tipping point comes for any given language, Tony says, it tends to come fast. Predicting exactly when is the hard part.

What are the biggest mistakes when building a Voice AI startup?

The honest answer is not about technology. It is about timing.

Tony was already working in speech recognition when he met Geoff Hinton in late 1985. His connectionist speech recognition work in the 1990s was technically ahead of its time. He also started a company to distribute music over dial-up internet.

Speechmatics has been running for 20 years, and for the first several of those years ASR was not good enough to sell as a startup product. It took Siri for the market to understand that speech recognition actually worked.

"Don't do things before the market is ready for them," Tony says, "but have them done when the market is ready."

The distinction matters. Being early is often indistinguishable from being wrong. The skill is building during the quiet period so you can move when the window opens.

What are the best use cases for speech-to-text?

Late in the AMA, Tony was asked whether Voice AI would become the dominant human-machine interface, or whether brain-machine interfaces would eventually take over. He said he hoped neither would dominate.

Speech is faster than typing. More natural. Better suited to devices too small for a keyboard, situations where hands are occupied, and any context where frictionless input matters. But it is not universal. In a room full of people, you might reasonably prefer the soft keyboard. Neuralink has its first happy customer, Tony notes, but he is personally never going to want a neural implant.

Dr Tony Robinson is the founder of Speechmatics, which provides speech-to-text, speaker diarization and real-time transcription across 55+ languages. Get building with Speechmatics today via our Portal.
