
Mar 21, 2023 | Read time 9 min

Accuracy Matters When Using GPT-4 and ChatGPT for Downstream Tasks

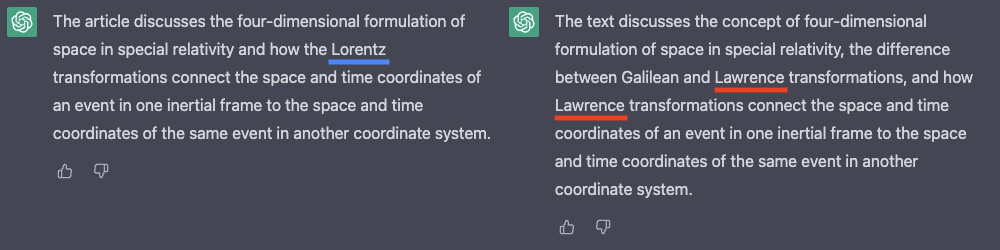

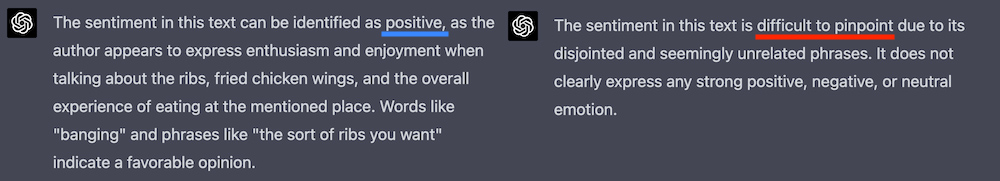

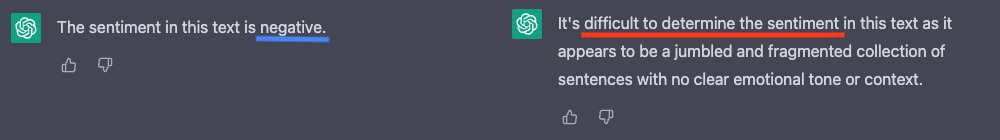

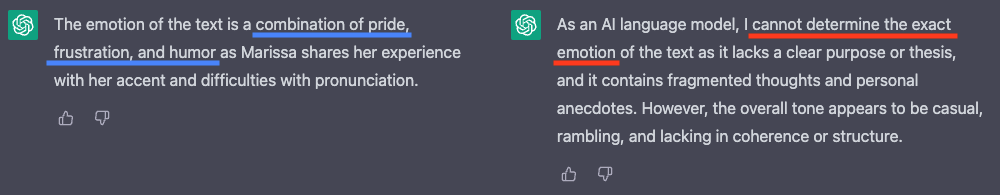

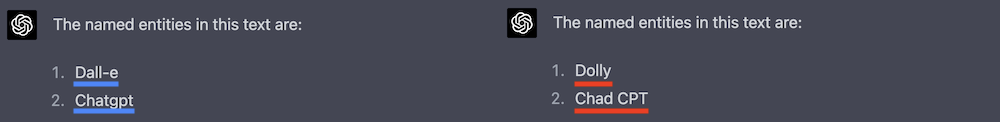

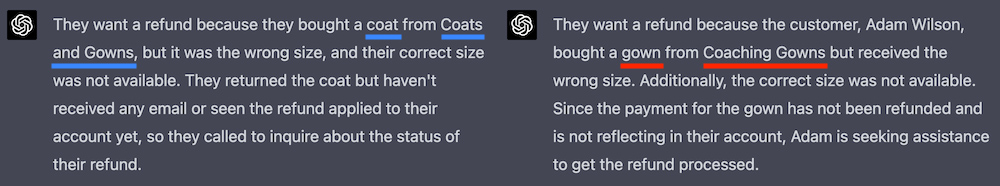

By combining the output of an automatic speech recognition (ASR) system with a large language model (LLM) such as GPT-4 or ChatGPT, we can perform many downstream tasks zero-shot, simply by including the transcript and a description of the task in the prompt.
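As a minimal sketch of the idea, the transcript and the task can be packed into a single prompt. This assumes a chat-style API that takes a list of role/content messages, which is the shape the OpenAI Chat Completions API accepts. The function name `build_zero_shot_messages`, the system instruction and the prompt layout are hypothetical illustrations, not the template used for this article. The actual call to GPT-4 or ChatGPT is left out so the example runs offline.

```python
from typing import Dict, List

# Hypothetical system instruction; the article does not state the wording it used.
SYSTEM_PROMPT = "You are a helpful assistant. Answer using only the transcript provided."


def build_zero_shot_messages(transcript: str, task: str) -> List[Dict[str, str]]:
    """Combine an ASR transcript and a task description into one chat prompt.

    No worked examples are included, so the model has to perform the task
    zero-shot from the instruction alone.
    """
    if not transcript.strip():
        raise ValueError("transcript is empty")
    # Transcript first, then the task, separated by a blank line.
    user_content = f"Transcript:\n{transcript.strip()}\n\nTask: {task.strip()}"
    # Role/content dictionaries, the message shape used by chat-style LLM APIs.
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_content},
    ]


if __name__ == "__main__":
    asr_output = "hello everyone and welcome to the quarterly results call"
    messages = build_zero_shot_messages(asr_output, "Summarise the transcript in one sentence.")
    for message in messages:
        print(f"[{message['role']}]\n{message['content']}\n")
```

Swapping the task string (for example "List the action items" instead of "Summarise") is all it takes to reuse the same transcript for a different downstream task, which is what makes this zero-shot setup convenient. Because the ASR output is pasted into the prompt verbatim, any transcription errors are passed straight to the model.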

Ana Olssen, Machine Learning Engineer

Footnotes

\* The full transcript was provided in the prompt for these videos.



Acknowledgements: Benedetta Cevoli, John Hughes and Liam Steadman
