Going global has never been easier

Reach the largest possible audience in real time, without the hassle of multiple language APIs and lengthy setup times.

Speed up processes

A single, powerful API enables higher capacity, so you can display captions faster and focus on revisions.

We speak your language

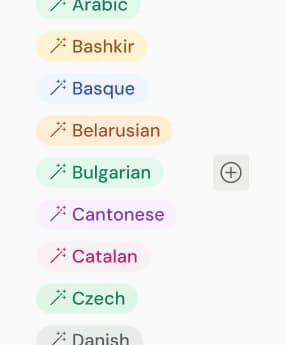

Speechmatics' AI-driven speech technology powers transcription, translation and understanding in 50 languages.

| Language | Transcription | Translation |
|---|---|---|
| German | | |
| Greek | | |
| Hindi | | |
| Hungarian | | |
| Interlingua | | |
| Irish | | |
| Italian | | |
| Indonesian | | |
| Japanese | | |
| Korean | | |
| Latvian | | |
| Lithuanian | | |
| Malay | | |
| Maltese | | |
| Mandarin | | |
| Marathi | | |
| Mongolian | | |

Built for a multilingual world

Trained to understand diverse accents, dialects, and code-switching - ideal for global enterprises and multilingual conversations.

Multilingual support for Spanish, Mandarin, Tamil and more.

Customizable for local vocab & terminology.

Comprehensive features, simple setup.

We've done the heavy lifting, so you don’t have to. Packed with features to give you access to global customer bases and audiences, without the headaches of lengthy setup and configuration.

Consistent. Reliable. Unmatched.

Speechmatics delivers high-accuracy transcription, even in languages that other vendors, such as Google, struggle with.

Our stats stack up.

Lower word error rate compared to Google (on German to English).

Better quality French to English than Google (as measured by COMET score).

Better quality for ASR + Translation for German, Swedish, and Japanese (as measured by COMET score).

Supporting transcription across 50+ languages, opening up a world of new opportunities.
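
For readers unfamiliar with the metric, word error rate (WER) is commonly defined as the number of word substitutions, deletions, and insertions needed to turn a transcript into the reference text, divided by the number of words in the reference. The Python sketch below illustrates that standard definition only. It is not Speechmatics' evaluation code, the function name `word_error_rate` is hypothetical, and it assumes plain whitespace tokenization with no text normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = (substitutions + deletions + insertions) / reference word count.

    Assumption: words are split on whitespace, with no case folding or
    punctuation handling. Real evaluations usually normalize text first.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the word-level edit distance between the reference
    # words processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)
```

For example, `word_error_rate("the cat sat on the mat", "the cat sat on mat")` counts one deleted word against six reference words, giving a WER of 1/6. Lower is better.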

Inclusivity – good for everyone, no matter the use case.

Make your content work harder for a larger audience and increase customer satisfaction. Whatever your industry, it's win after win after win after...

Localize Media Content With Speechmatics

Our ASR's language coverage spans over half the world’s population. Let your customers’ media reach as wide an audience as possible, regardless of the spoken language.

Build Inclusive Classrooms For Everyone

Speech translation encourages diversity and inclusion in education, and helps ensure your services remain compliant with international laws and standards.

Revolutionize Contact Centers with Speech

Don’t let language and dialect barriers hold you back. Extend your offering with contact center solutions to cover diverse customer bases, without compromising quality of service.

The proof is in the pudding. Or budino. Or मिठाई.

Test results are great, but nothing beats a live demo. Below is a live stream of four international radio broadcasts, with transcription and translation both shown in real time.

The science behind the magic

At Speechmatics, our technical breakthroughs in Self-Supervised Learning (SSL) give you game-changing results, both today and in the future.

High accuracy across languages

Our bar for quality is high, and our underlying technology ensures we hit it across every supported language.

Dialects and accents all included

We ensure that our accuracy holds across dialects, accents, and different environments. With Speechmatics, inclusivity is built in.

New languages added with ease

Our SSL technology means we can add new languages, fast. We added Persian within 4 weeks. Need a language we don’t currently offer? Get in touch.

Constantly improving, always evolving

We achieved a 40% decrease in transcription error for Norwegian. Getting this right takes machine learning excellence, quality data, and language expertise.