Jan 21, 2026 | Read time 4 min

You can’t hurry love, but you can hurry final transcripts

Introducing 250ms final transcripts for Voice AI

TL;DR

For responsive Voice AI, you need transcripts straight away. Once speech has finished, send us the new ForceEndOfUtterance message and we’ll do the rest.

Turn Detection → Send ForceEndOfUtterance → Receive Final Transcript (~250ms)

Trigger the message using your own client-side logic, or handle the entire pipeline automatically with our new Voice SDK, Pipecat integration, and upcoming LiveKit update.

Introduction

In the world of voice agents, the "awkward silence" is the enemy.

We all know the feeling. You finish asking a voice assistant a question.

And then you wait.

…

That gap…

Between you finishing your sentence…

And the AI responding…

Is where the magic of conversational AI lives or dies.

For us at Speechmatics, solving this isn't just about raw processing speed (though we have that). It’s about having the confidence to know when to send you the finals.

We are introducing Forced End of Utterance (FEOU) to let you tell us when you want them.

The philosophy is simple: If you are happy that speech is over, then so are we.

The Problem: The Transcription Waiting Game

To understand why this feature matters, we have to look at how transcription works under the hood. Real-time transcription engines typically output two things:

Partials: Low-latency, evolving best guesses of what is being said. (e.g., "I want two...")

Finals: Highest-confidence, punctuated, stable text. (e.g., "I want to go to the park.")

Most systems (voice agents, scribes, etc.) wait for the accurate "Finals" rather than spend LLM tokens on text that could still change. The problem? To generate "Finals", standard engines hold back for a fixed period of silence to ensure the user has truly finished speaking.

Traditionally, the engine plays it safe. It waits for a preset amount of silence or a "Max Delay" timer to expire before locking in the text. It needs to ensure that the silence after "Two..." isn't just a pause before "...hundred."

For captioning, this ensures the highest accuracy. For a conversational voice agent, that buffer feels like an eternity.
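To make the partials/finals split concrete, here is a minimal sketch of how a client might track the two streams. The `TranscriptView` class and its method names are hypothetical and not part of any Speechmatics SDK; the point is only that partials are replaceable while finals are append-only.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TranscriptView:
    """Keeps stable finals separate from the latest, replaceable partial."""

    finals: List[str] = field(default_factory=list)
    partial: str = ""

    def on_partial(self, text: str) -> None:
        # A new partial replaces the previous best guess; finals are untouched.
        self.partial = text

    def on_final(self, text: str) -> None:
        # Finals are append-only; the pending partial is now superseded.
        self.finals.append(text)
        self.partial = ""

    def stable_text(self) -> str:
        # Text that will not change: safe to pass to an LLM.
        return " ".join(self.finals)

    def display_text(self) -> str:
        # Stable text plus the current guess: useful for on-screen feedback.
        parts = self.finals + ([self.partial] if self.partial else [])
        return " ".join(parts)
```

An agent that only ever reads `stable_text()` is exactly the kind of system that ends up waiting on the silence buffer described above.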

The Solution: Force End of Utterance (FEOU)

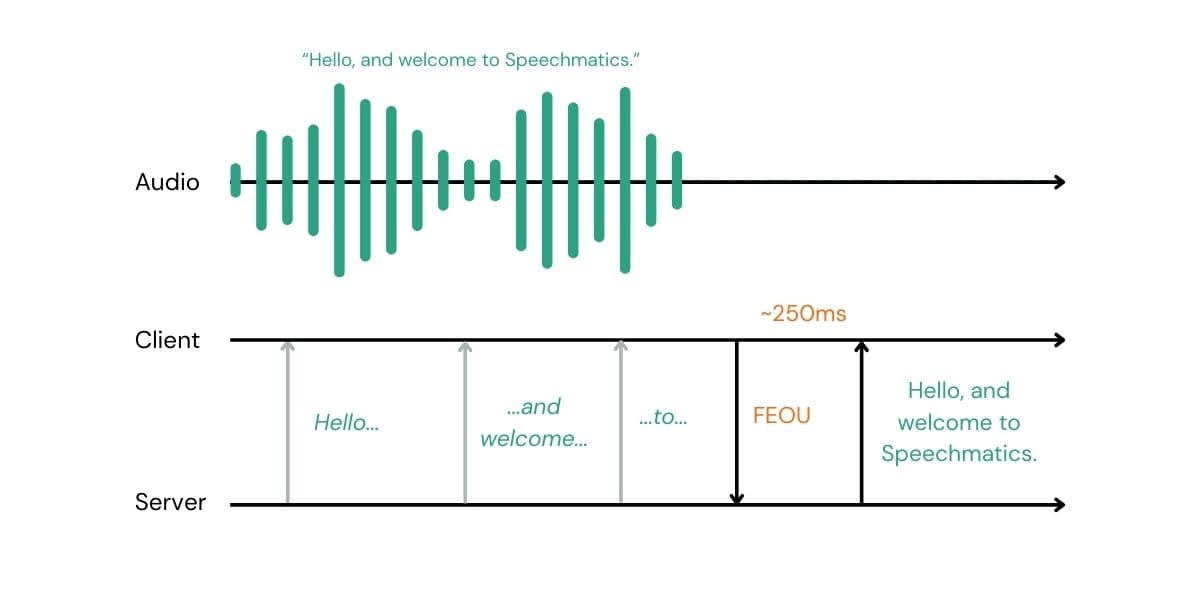

With the new ForceEndOfUtterance message, we have decoupled "Turn Detection" from "Transcription". Your client can send a ForceEndOfUtterance message at the right moment, forcing the current segment to close and immediately emitting a final transcript, ready to be passed straight to the LLM.

If you have a Voice Activity Detector (VAD), a "Push-to-Talk" button, or a sophisticated multimodal model that predicts when a user has finished speaking, you can now immediately signal our engine.

When we receive an FEOU message, we stop waiting. We immediately process all the audio in our buffer, apply punctuation, finalize the transcript, and send it back to you.
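If your turn detection is a simple VAD, the client-side trigger can be very small. The sketch below is one possible approach, not the Voice SDK's logic: `SilenceTrigger` is a hypothetical helper, and the 300ms silence window and 20ms frame size are illustrative values only. It takes one speech/no-speech decision per audio frame and returns `True` once per turn, at the moment you would send the FEOU message.

```python
class SilenceTrigger:
    """Fires once when enough silence follows speech (hypothetical helper)."""

    def __init__(self, silence_ms: int = 300, frame_ms: int = 20) -> None:
        # Number of consecutive silent frames that counts as "turn over".
        self.frames_needed = max(1, silence_ms // frame_ms)
        self.silent_frames = 0
        self.heard_speech = False

    def update(self, is_speech: bool) -> bool:
        """Feed one VAD decision; return True when the turn has just ended."""
        if is_speech:
            self.heard_speech = True
            self.silent_frames = 0
            return False
        if not self.heard_speech:
            # Silence before any speech is not the end of a turn.
            return False
        self.silent_frames += 1
        if self.silent_frames >= self.frames_needed:
            # Reset so the trigger fires only once per turn.
            self.heard_speech = False
            self.silent_frames = 0
            return True
        return False
```

A push-to-talk button skips all of this: the button release is the trigger.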

The triple lock of latency

With this release, you now have three distinct levers to pull to optimize your agent's responsiveness:

1. The Manual Override: ForceEndOfUtterance

This is the new power feature. You send a JSON message to the websocket, and we finalize immediately.

Best for: Sophisticated voice agents using client-side VAD, push-to-talk interfaces, or whatever you can dream up.

Benefit: Lowest possible latency. You define the rules.

2. The Safety Net: end_of_utterance_silence_trigger

This is a server-side setting. You tell us: "If you hear X milliseconds without speech, send an EndOfUtterance (EOU) message."

Best for: A backup to ensure turns are eventually closed if the client-side signal fails.

Recommendation: Keep this lower than max_delay (e.g., 0.5s - 0.8s).

3. The Backstop: max_delay

The classic setting. A consistent delay before finalizing transcription; the longer it is, the more audio context the engine has before it must finalize.

Best for: Ensuring text eventually appears on screen during long monologues or dictation.

Recommendation: 1s - 2s for conversational agents.
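Here is how levers 2 and 3 might sit together in a session config. Treat this as a sketch rather than the definitive schema: only the two setting names come from the text above, while the nesting of `end_of_utterance_silence_trigger` under `conversation_config`, the `language` field, and the helper name `build_transcription_config` are assumptions.

```python
def build_transcription_config(
    max_delay: float = 1.0,
    silence_trigger: float = 0.5,
    language: str = "en",
) -> dict:
    """Build a config dict with the safety net below the backstop."""
    if silence_trigger >= max_delay:
        # Follows the recommendation to keep the silence trigger under max_delay.
        raise ValueError("end_of_utterance_silence_trigger should be lower than max_delay")
    return {
        "language": language,
        # Lever 3, the backstop: 1s - 2s suggested for conversational agents.
        "max_delay": max_delay,
        # Lever 2, the safety net (assumed to live under conversation_config).
        "conversation_config": {
            "end_of_utterance_silence_trigger": silence_trigger,
        },
    }
```

Validating the relationship in one place means a misconfigured safety net fails loudly at startup instead of quietly adding latency to every turn.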

Using ForceEndOfUtterance

The easiest way to start bringing your end-of-turn latency down is to use our new Python Voice SDK. It brings together loads of helpful features for voice AI. It can handle the entire turn detection pipeline for you. It includes the open source Silero voice activity detector and the Pipecat turn detection model (Smart Turn V3) to detect an end of turn and send the FEOU message.

Alternatively, in both our real-time (RT) and Voice SDKs, you can use your own logic to finalize by sending the ForceEndOfUtterance message yourself.

This will send back an EndOfUtterance message. For a complete, runnable example of how to implement this logic, check out our Voice Agent Turn Detection tutorial on GitHub.
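At the websocket level, that exchange might look like the sketch below. Everything about the wire format here is an assumption: that ForceEndOfUtterance uses a `{"message": ...}` JSON envelope, that finals arrive as `AddTranscript` messages carrying their text in `metadata.transcript`, and that they arrive before the EndOfUtterance message. `TurnCollector` and `force_end_of_utterance_payload` are hypothetical names; check the tutorial for the real SDK calls.

```python
import json
from typing import List, Optional


def force_end_of_utterance_payload() -> str:
    # Assumed wire format: a JSON object naming the message type.
    return json.dumps({"message": "ForceEndOfUtterance"})


class TurnCollector:
    """Buffers final transcripts until the server reports the utterance is over."""

    def __init__(self) -> None:
        self.finals: List[str] = []

    def handle(self, raw: str) -> Optional[str]:
        """Process one server message; return the full turn text when it ends."""
        msg = json.loads(raw)
        kind = msg.get("message")
        if kind == "AddTranscript":
            # Assumed location of the final text within the message.
            text = msg.get("metadata", {}).get("transcript", "").strip()
            if text:
                self.finals.append(text)
            return None
        if kind == "EndOfUtterance":
            turn = " ".join(self.finals)
            self.finals = []
            return turn
        # Partials and any other messages are ignored by this collector.
        return None
```

In use, your turn detection would send `force_end_of_utterance_payload()` over the open websocket, then pass each incoming message to `handle()` and forward the first non-`None` result to the LLM.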

Out of the box, our new Pipecat integration and upcoming LiveKit update will use their internal turn detection to trigger finalization for you automatically.

Start building now with our integration section in the Speechmatics Academy.

Let's talk numbers

250ms: The New Standard for Finals

From the moment your client sends the ForceEndOfUtterance signal, you can expect to receive a final transcript in approximately 250ms (network dependent).

To understand why this is significant, we need to look at the total latency equation.

The Old Equation (Standard Engines)

In a traditional cloud STT setup, you are at the mercy of the server’s safety settings.

Latency = Server Silence Buffer + Processing Time

Most engines enforce a silence buffer of 700ms–1000ms. You pay this "waiting tax" on every single turn to ensure accuracy, regardless of the context.

The New Equation (FEOU)

With FEOU, we remove the server-side wait entirely.

Latency = Your Turn Detection + Speechmatics Processing (250ms)

This puts the latency budget entirely in your hands.

Your Turn Detection: You decide the logic.

VAD or Smart Turn: You can even send us the signal pre-emptively, so that once the turn is complete the transcript is ready.

Push-to-Talk: If you use a hardware button, this is effectively 0ms. The moment the user releases the button, we begin finalization.

Our Processing: We handle the heavy lifting of finalizing text and formatting punctuation in that ~250ms window.
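The two equations are simple enough to put side by side in code. This is a back-of-the-envelope sketch with hypothetical function names; the 700ms buffer and ~250ms processing figures come from the text above, and network time is left out.

```python
def legacy_latency_ms(silence_buffer_ms: float, processing_ms: float) -> float:
    # Old equation: Latency = Server Silence Buffer + Processing Time
    return silence_buffer_ms + processing_ms


def feou_latency_ms(turn_detection_ms: float, processing_ms: float = 250) -> float:
    # New equation: Latency = Your Turn Detection + Speechmatics Processing (~250ms)
    return turn_detection_ms + processing_ms
```

With push-to-talk, `feou_latency_ms(0)` comes to 250ms. With a hypothetical 300ms client-side VAD window, `feou_latency_ms(300)` comes to 550ms. A traditional engine with a 700ms silence buffer and, purely for illustration, the same 250ms of processing would give `legacy_latency_ms(700, 250)`, or 950ms.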

Conclusion

Don’t wait to reduce your latency.

By using ForceEndOfUtterance, you get the best of both worlds: the unparalleled accuracy of Speechmatics' transcription, with the snappy, real-time responsiveness required for modern voice agents. Try it out for yourself in the Speechmatics Academy.

If your system is confident the user is done, don't wait for us.

Force the finals.
