Updated: Mar 23, 2026 | Read time 6 min

Real-World Examples of Speech and Voice Recognition Technology

Speech recognition technology is embedded in nearly every industry, from healthcare and banking to defense and media. Here are ten real-world examples of how it works, and why accuracy is everything.

What is speech recognition technology?

Speech recognition technology is software that converts spoken language into text or commands in real time. It uses acoustic models, language models, and increasingly deep learning to identify words, accents, and context from audio input. Also referred to as voice recognition or automatic speech recognition (ASR), the technology powers everything from voice agents and medical transcription to live captioning and fighter jet control systems.

How does speech recognition technology work?

Modern speech recognition systems follow three core steps.

First, the audio input is captured and cleaned — background noise is filtered and the raw sound wave is broken into small segments for analysis.
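To make the segmentation step concrete, here is a minimal sketch of cutting a waveform into short, overlapping frames. The 25 ms frame length and 10 ms hop are assumptions (typical front-end values, not figures from this article), `frame_signal` is a hypothetical helper, and noise filtering is left out entirely.

```python
import math

def frame_signal(samples, sample_rate, frame_ms=25.0, hop_ms=10.0):
    """Split a list of audio samples into overlapping fixed-length frames."""
    frame_len = int(sample_rate * frame_ms / 1000)  # samples per frame
    hop_len = int(sample_rate * hop_ms / 1000)      # samples between frame starts
    frames = []
    # Stop once a full frame no longer fits; trailing samples are dropped.
    for start in range(0, len(samples) - frame_len + 1, hop_len):
        frames.append(samples[start:start + frame_len])
    return frames

# One second of a 440 Hz tone at 16 kHz, standing in for real speech.
sample_rate = 16000
tone = [math.sin(2 * math.pi * 440 * n / sample_rate) for n in range(sample_rate)]
frames = frame_signal(tone, sample_rate)
print(len(frames), len(frames[0]))  # prints: 98 400
```

Overlapping frames are a common design choice because speech sounds do not line up neatly with frame boundaries; the overlap means each moment of audio is seen by more than one frame.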

Second, an acoustic model maps those audio segments to phonemes — the building blocks of spoken language. This is where accents, dialects, and speaking pace create the most variability, and where the quality of the underlying model determines accuracy.

Third, a language model predicts which words and phrases are most likely given the context — so "two" versus "too" versus "to" is resolved not just by sound but by what makes sense in the sentence.
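The homophone case can be illustrated with a toy example. This is only a sketch: the bigram counts below are invented for illustration, `resolve_homophones` is a hypothetical function, and production language models are far larger and usually neural rather than count-based.

```python
HOMOPHONES = {"to", "too", "two"}

# Hypothetical bigram counts, invented for this illustration only.
BIGRAM_COUNTS = {
    ("want", "to"): 50, ("want", "two"): 5, ("want", "too"): 1,
    ("to", "go"): 40, ("two", "tickets"): 30, ("too", "late"): 25,
    ("two", "go"): 1, ("to", "tickets"): 1,
}

def score(prev_word, candidate, next_word):
    # Add one to each count so unseen pairs score low rather than zero.
    # The product of counts is a crude stand-in for a probability.
    left = BIGRAM_COUNTS.get((prev_word, candidate), 0) + 1
    right = BIGRAM_COUNTS.get((candidate, next_word), 0) + 1
    return left * right

def resolve_homophones(words):
    """Replace each to/too/two with the spelling that best fits its neighbours."""
    resolved = list(words)
    for i, word in enumerate(words):
        if word in HOMOPHONES:
            prev_word = resolved[i - 1] if i > 0 else "<s>"
            next_word = words[i + 1] if i + 1 < len(words) else "</s>"
            resolved[i] = max(sorted(HOMOPHONES),
                              key=lambda c: score(prev_word, c, next_word))
    return resolved

print(" ".join(resolve_homophones("i want too go".split())))      # prints: i want to go
print(" ".join(resolve_homophones("i want to tickets".split())))  # prints: i want two tickets
```

The acoustic evidence is identical for all three spellings here, so the surrounding words alone decide the outcome.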

Modern ASR systems layer deep learning across all three steps and improve as they are trained on more audio. The best systems handle multiple languages, heavy accents, technical vocabulary, and noisy environments with little loss of accuracy, which matters enormously in high-stakes settings like healthcare, legal, and live broadcast.

Speech recognition technology sits at the heart of millions of homes worldwide, in devices that listen to your voice and carry out your commands. You might think the technology doesn’t extend much further, but this rabbit hole is a deep one.

The technology within speech recognition software goes beyond what most of us know. Speech-to-text, such as Speechmatics’ Autonomous Speech Recognition (ASR), stretches its influence across society. This article will dive into ten examples of speech recognition and areas where speech-to-text technology makes a valuable difference.

1) AI medical scribes and clinical documentation

Doctors in both the US and UK spend an average of two hours on administrative tasks for every hour of direct patient care. Speech recognition technology is changing that.

Ambient AI scribes listen passively to patient-doctor conversations, automatically generating structured clinical notes and syncing them directly to electronic health records — without the clinician typing a single word. A 2025 University of Pennsylvania study found that physicians using ambient AI scribes spent 20% less time on documentation per appointment and reduced after-hours work by 30%.

Beyond documentation, speech recognition powers symptom triage systems that help patients identify whether they need urgent care, freeing clinical staff for higher-value work.

In healthcare, accuracy isn't a nice-to-have — a misheard drug name or incorrect dosage in a clinical note can have serious consequences. This makes the quality of the underlying speech recognition engine a patient safety consideration, not just a technical one.

2) Contact center automation and call analytics

Contact centers handle millions of customer interactions every day. Speech recognition technology is transforming how those interactions are captured, analyzed, and acted on.

In real time, ASR systems transcribe calls as they happen — flagging keywords, detecting customer sentiment, and surfacing relevant information to agents without them needing to search. After the call, speech analytics platforms process transcripts at scale to identify trends, compliance risks, and training opportunities that would be impossible to find through manual review.
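As a rough illustration of keyword flagging, the sketch below scans transcript segments for a watch list of phrases. Everything in it is an assumption made for the example: the segment fields (`start`, `speaker`, `text`), the watch terms, and the `flag_keywords` helper are hypothetical and do not reflect any particular vendor's transcript format.

```python
import re

# Hypothetical watch list; a real deployment would define its own terms.
WATCH_TERMS = ["guaranteed return", "cancel my account", "complaint"]

def flag_keywords(segments, terms=WATCH_TERMS):
    """Return (start_seconds, speaker, term) for each watched term found."""
    # Whole-phrase, case-insensitive matching on word boundaries.
    patterns = [(t, re.compile(r"\b" + re.escape(t) + r"\b", re.IGNORECASE))
                for t in terms]
    hits = []
    for seg in segments:
        for term, pattern in patterns:
            if pattern.search(seg["text"]):
                hits.append((seg["start"], seg["speaker"], term))
    return hits

# Invented transcript segments, in the assumed format.
segments = [
    {"start": 0.0, "speaker": "agent", "text": "Thanks for calling, how can I help?"},
    {"start": 4.2, "speaker": "customer", "text": "I want to make a complaint about my bill."},
    {"start": 9.8, "speaker": "agent", "text": "This fund offers a guaranteed return."},
]

for hit in flag_keywords(segments):
    print(hit)
```

Exact phrase matching like this only works if the transcript contains the right words in the first place, which is why a misrecognized keyword matters so much in compliance settings.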

Major financial services firms, telecoms providers, and utilities companies now process hundreds of thousands of calls per month through automated transcription pipelines. The business case is straightforward: faster resolution times, lower handling costs, and a complete, searchable record of every customer interaction.

Accuracy is critical here too. A misidentified keyword in a compliance-sensitive call — a financial advice conversation, for example — can create regulatory risk. Contact centers operating in regulated industries require ASR systems that perform consistently across accents, background noise, and rapid speech.

3) Legal transcription and courtroom documentation

Courts, law firms, and legal service providers generate enormous volumes of spoken content — hearings, depositions, client consultations, and tribunal proceedings — all of which require accurate written records.

Traditionally, this relied entirely on human court reporters and legal transcriptionists. Speech recognition technology is now handling an increasing share of this work, transcribing proceedings in real time and producing structured transcripts that legal teams can search, annotate, and reference.

The stakes are high. A transcript that misidentifies a witness statement, drops a key phrase, or confuses two speakers can have serious legal consequences. This is why legal transcription demands ASR systems with exceptional accuracy across different accents, technical legal vocabulary, and multi-speaker environments — capabilities that generic consumer voice tools cannot reliably deliver.

In the UK, His Majesty's Courts and Tribunals Service has been piloting digital recording and transcription across court venues as part of a broader modernisation programme. In the US, federal and state courts are increasingly evaluating automated transcription to address the ongoing court reporter shortage.

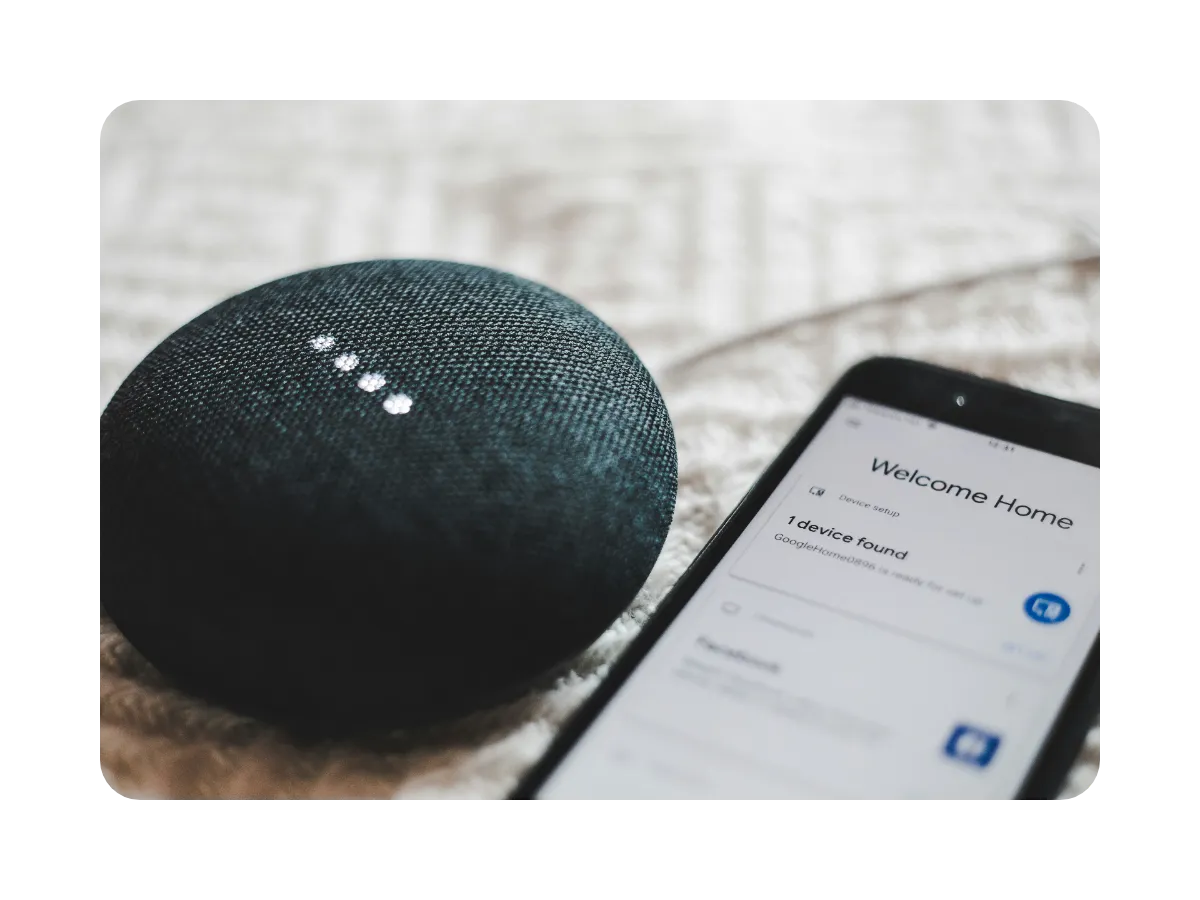

4) Voice assistants and smart home control

Voice assistants are now embedded in billions of devices worldwide — smartphones, smart speakers, televisions, thermostats, and home security systems. According to Statista, the number of voice assistant interactions is projected to reach 8 billion by 2026, up from 4.2 billion in 2020.

The appeal is straightforward: speech recognition removes the need for a physical interface entirely. Lights, heating, music, shopping, reminders, and home security can all be controlled through natural conversation — without unlocking a phone or finding a remote.

Beyond convenience, voice control is transformative for people with mobility impairments or visual disabilities, for whom physical interfaces can be a significant barrier. Speech recognition technology makes the home environment genuinely accessible in a way that touchscreens and keyboards cannot.

The underlying challenge for smart home devices is performance in noisy, everyday environments — competing audio sources, background conversation, and varied accents all create conditions where lower-quality ASR systems degrade quickly. Consistent performance across real-world conditions is what separates the best voice assistant experiences from the frustrating ones.

5) Automotive voice control and driver safety

Modern vehicles are among the most sophisticated environments for speech recognition technology. From navigation and media control to hands-free calling and vehicle diagnostics, voice commands now handle functions that previously required a driver to take their eyes off the road.

Apple CarPlay, Android Auto, and manufacturer-native systems like BMW's Intelligent Personal Assistant and Mercedes-Benz's MBUX all rely on speech recognition to interpret natural language commands in real time. Drivers can set destinations, send messages, adjust climate controls, and access vehicle information without touching a screen.

The safety implications are significant. The National Highway Traffic Safety Administration (NHTSA) estimates that distracted driving — including manual interaction with in-vehicle systems — contributes to thousands of road fatalities annually. Voice control directly reduces that risk by keeping hands on the wheel and eyes on the road.

Automotive ASR operates in one of the most acoustically challenging environments imaginable — road noise, wind, music, and passenger conversation all compete with the driver's voice. Systems that perform accurately in these conditions require robust noise cancellation and speaker isolation capabilities built into the speech recognition engine itself.

6) Meeting intelligence and workplace productivity

The modern workplace generates more spoken content than ever — video calls, hybrid meetings, client briefings, and internal standups all produce valuable information that traditionally disappeared the moment the call ended.

Speech recognition technology has transformed how organizations capture and use that content. Meeting transcription tools now automatically convert spoken conversations into searchable transcripts, action items, and summaries — in real time, without a human note-taker in the room.

Platforms like Microsoft Teams, Zoom, and Google Meet all offer native transcription powered by ASR. Beyond basic transcription, a new generation of meeting intelligence tools layer on speaker identification, topic tagging, sentiment analysis, and automatic follow-up generation — turning every meeting into a structured, actionable record.

For global organizations operating across time zones and languages, this is particularly valuable. A Tokyo team can review a verbatim transcript of a London meeting, with speaker attribution and key decisions clearly flagged, without anyone having to write up notes manually.

The accuracy of the underlying ASR directly affects the usefulness of these outputs. A transcript with frequent errors requires heavy manual correction — eliminating much of the time saving the tool was supposed to deliver.

7) Defense and military operations

Fighter jets are among the most demanding environments for speech recognition technology — and among the most consequential. The RAF's Eurofighter Typhoon uses voice command systems to control an array of cockpit functions, allowing pilots to operate critical systems without removing their hands from the controls. In combat conditions, that hands-free capability is not a convenience — it is a tactical advantage.

Beyond the cockpit, speech recognition supports military operations at every level. Soldiers use voice-activated systems to access mission briefings, consult maps, and transmit encrypted communications in the field. Command centers use automated transcription to maintain real-time logs of operational communications without manual documentation.

The performance requirements in defense applications are extreme. Systems must function accurately in high-noise environments (engine roar, wind, radio static), under stress, and with specialized military vocabulary that general-purpose ASR models are not trained to handle. Latency is also critical: a delayed response to a voice command in a fast jet is not acceptable.

This makes defense one of the most exacting test cases for speech recognition technology — and one where the gap between adequate and excellent ASR has real operational consequences.

8) Live broadcast captioning and media distribution

Broadcasting organizations have a legal obligation to caption their content — and the volume of programming they produce makes manual captioning impractical at scale. Speech recognition technology is now the backbone of live captioning for news broadcasts, sports coverage, and live events worldwide.

ASR systems transcribe spoken audio in real time, generating captions with low enough latency to appear synchronized with the broadcast. For live programming — where there is no opportunity to review or correct before transmission — accuracy is non-negotiable. A captioning error on a live news broadcast is visible to every viewer simultaneously and cannot be recalled.

Beyond compliance, automatic captioning enables broadcasters to make their entire content archive accessible and searchable — opening up new workflows for content discovery, rights management, and multilingual distribution.

The specific demands of broadcast captioning — real-time performance, technical vocabulary, multiple speakers, and live acoustic environments — require ASR systems built for professional media workflows rather than general consumer use. Word error rate and latency are the two metrics that matter most, and both need to perform consistently across every program, every day.
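Word error rate is commonly defined as the number of word substitutions, deletions, and insertions needed to turn the system's output into the reference transcript, divided by the number of words in the reference. The sketch below computes it with a word-level edit distance. The `word_error_rate` function and the sample sentences are illustrative, and the normalization (lowercasing and whitespace splitting) is a simplifying assumption; real evaluations usually normalize punctuation and numbers as well.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from output
            insertion = curr[j - 1] + 1  # extra word in output
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)

reference = "the patient takes two tablets daily"
hypothesis = "the patient takes too tablets"
print(round(word_error_rate(reference, hypothesis), 3))  # prints: 0.333
```

Here one substitution ("too" for "two") and one deletion ("daily") against a six-word reference give a WER of 2/6. Because insertions count as errors, WER can exceed 1.0 when the output is much longer than the reference.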

9) Voice search and digital accessibility

Search behavior has shifted fundamentally over the past decade. Voice search now accounts for a significant share of all search queries globally, driven by the ubiquity of voice assistants on mobile devices and smart speakers.

This shift is visible across service industries too. Someone planning a house move, for instance, might use voice search to compare removal costs on the go, driving traffic to comparison platforms like Moving Compared from users who'd never type out the same query.

For users with visual impairments, motor disabilities, or literacy challenges, voice search is not a convenience feature — it is the primary way they interact with digital content. Speech recognition technology removes the keyboard as a barrier to accessing information, services, and communication tools.

Screen readers, voice-activated browsers, and dictation software all rely on accurate ASR to function effectively. When the underlying speech recognition fails — mishearing a search query, misidentifying a command, or dropping words — the impact falls disproportionately on the users who depend on it most.

For organizations building accessible digital products, the choice of speech recognition engine directly affects whether their accessibility commitments are genuine or performative. High accuracy across accents, dialects, and non-standard speech patterns is the benchmark that matters.

10) Education and language learning

Speech recognition technology is reshaping how people learn — both in classrooms and independently. Language learning platforms use ASR to evaluate pronunciation in real time, giving learners immediate feedback on accuracy that a human tutor could not provide at scale.

Platforms like Duolingo use speech recognition to assess spoken responses, while university language departments are integrating voice-based assessment tools that reduce marking burden and provide more consistent evaluation across large student cohorts.

Beyond language learning, speech recognition supports students with dyslexia, ADHD, and other learning differences by enabling dictation as an alternative to typing — removing a significant barrier to written expression. Real-time transcription of lectures also benefits students who process information better through reading than listening, or who need to review content after the fact.

For educational institutions, the accuracy of ASR across different age groups, non-native accents, and varying speaking speeds is the critical performance requirement — children and non-native speakers are consistently underserved by models trained predominantly on adult native speech.

Speech Recognition Is Everywhere

In this day and age, you’ll be hard-pressed to find an area of your life not influenced by speech recognition technology. The scale is colossal: while you tell Apple CarPlay to reply to your partner’s message, a doctor is sifting through their transcribed notes, and a fighter pilot is telling their plane to lock onto a target.

Of course, there are still many challenges – the technology is far from perfect – but the benefits are there for all to see. We at Speechmatics will continue to ensure the world reaps ASR’s potential rewards.
